The $1 Trillion Question

The $1 Trillion Question, Mortality in the Age of Generative Ghosts, Your Mind and AI, How to Design AI Tutors for Learning, The Imagining Summit Preview: Adam Cutler, and Helen's Book of the Week.

Agentic AI is defined by its agenticness: “the degree to which a system can adaptably achieve complex goals in complex environments with limited direct supervision.” It promises to transform how we make decisions.

Generative AI that can act as a personal agent will redefine how we use the web and applications. While we are still a long way from fully agentic AI, the commercial direction is clear. Google’s Gemini offers early glimpses of how personal, dynamic, multimodal autonomous agents will fundamentally alter how we access and assess information, weigh options and make decisions. OpenAI has been clear that it sees GPTs evolving toward more agentic capabilities.

Self-driving vehicles are a familiar example of agentic AI: they pursue complex goals in varied environments with limited supervision. Tesla's Autopilot, probably the best-known example, has more agency than simple driver-assistance technologies such as adaptive cruise control. It still falls short of more advanced systems that detect and respond to their environment, for example by autonomously accelerating to overtake slower vehicles.

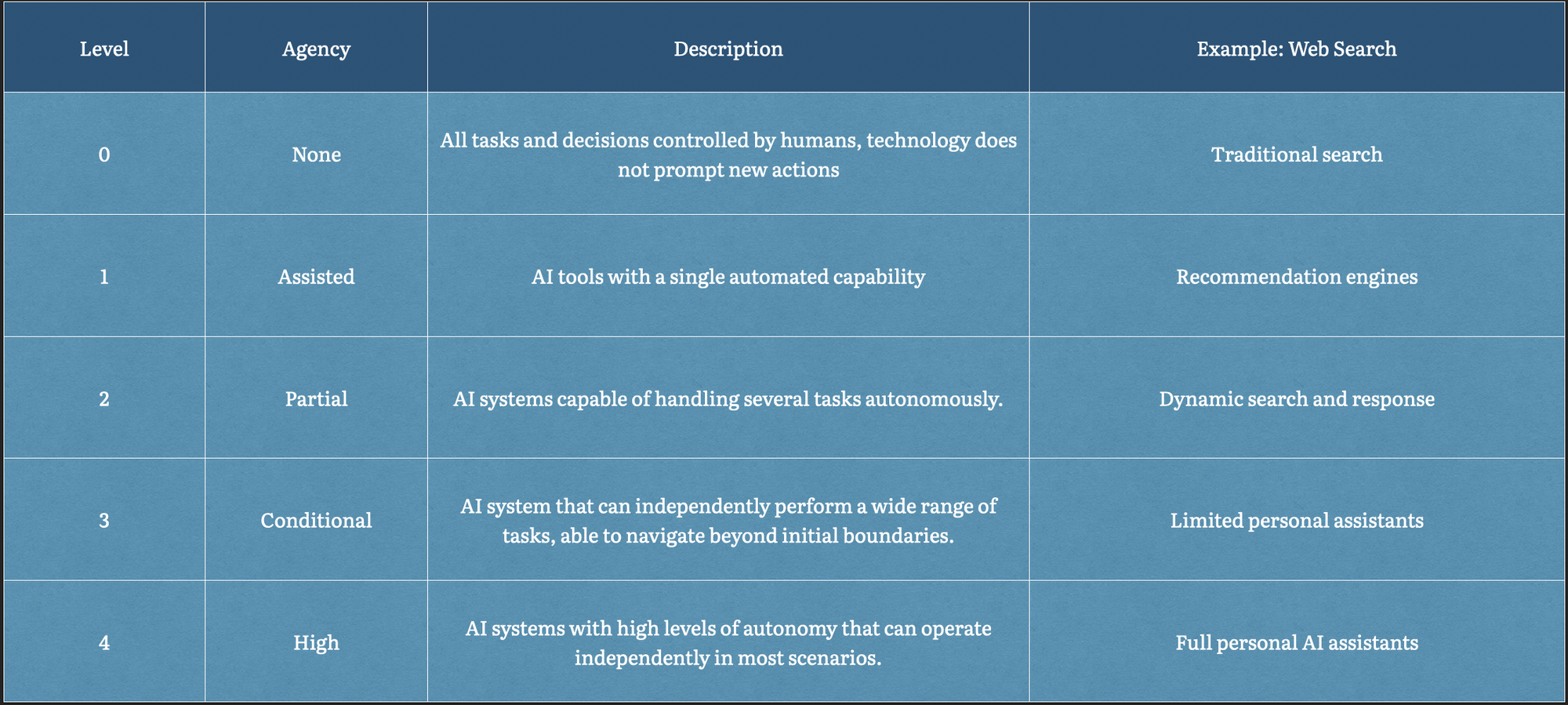

We propose a level system for agentic AI, analogous to the levels of driving automation developed for self-driving cars.

But agentic AI in a purely digital, online environment isn’t something we’ve seen yet, despite its being something of a holy grail in the AI world. OpenAI views agenticness as a “prerequisite for some of the wider systemic impacts that many expect from the diffusion of AI” and as an “impact multiplier” for the entire field of AI.

We think agentic AI is fundamentally about the ambition to automate human decision-making, and that it will reshape our cognitive landscape in profound ways. Viewing agenticness as a disruptor of how we make decisions helps us see why agentic AI is so difficult to achieve.

The Artificiality Weekend Briefing: About AI, Not Written by AI